Verifiability, System Drift, and the Future of AI Automation: Reflections on Andrej Karpathy’s Insights

Some thoughts on verifiability in automation, building systems that last, and where AI technology might actually be headed

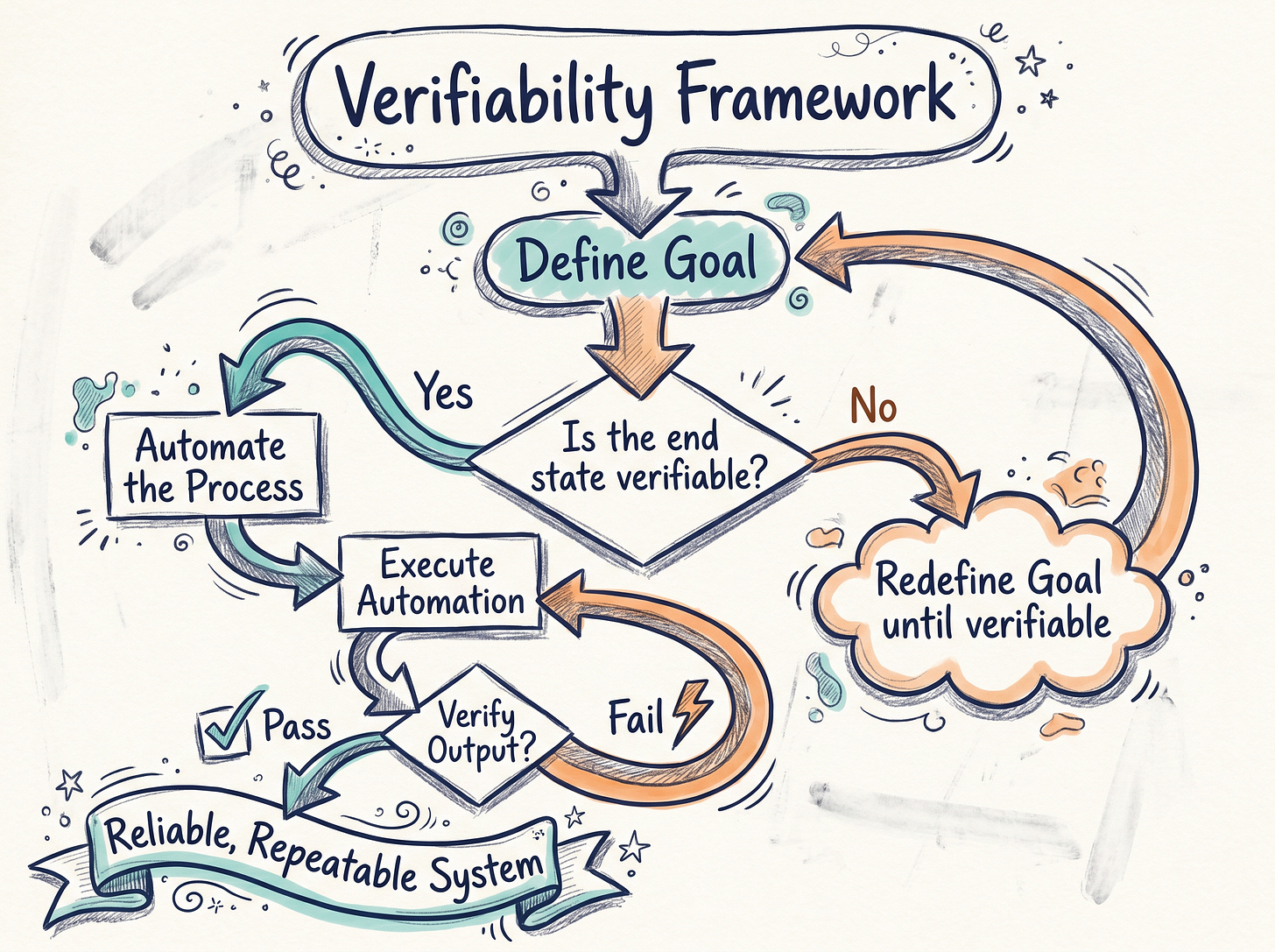

I recently watched an interview with Andrej Karpathy, and one thing he said really stuck with me. When asked if everything could be automated, he replied, “If it could be verified, yes, it could.” That idea has been on my mind ever since.

Verifiability means having a clear, repeatable goal or outcome you can check against. If you can confirm the system did exactly what it was supposed to, automation feels much more sensible: you’re not just crossing your fingers and hoping for the best.

Thinking back on the automations I’ve built myself, this makes perfect sense. Most of the setups I rely on have clear checkpoints or results that are easy to verify. For instance, I have an automation that posts five news items daily to Twitter and LinkedIn. Either those posts go out, or they don’t. Pretty straightforward.

Being able to check outputs throughout the day really helps keep things running smoothly and reliably.
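To make the "either those posts go out, or they don’t" check concrete, here is a minimal sketch in Python. It assumes a hypothetical posting log in which each publish attempt is recorded with a platform, a date, and a success flag; the names `PostRecord`, `verify_daily_posts`, and the platform labels are illustrative and not taken from my actual setup.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date

EXPECTED_POSTS_PER_DAY = 5  # the article's target: five news items daily
PLATFORMS = ("twitter", "linkedin")  # hypothetical platform labels


@dataclass(frozen=True)
class PostRecord:
    """One publish attempt, as a hypothetical posting log might record it."""
    platform: str
    day: date
    succeeded: bool


def verify_daily_posts(records, day):
    """Return {platform: missing_count} for platforms that fell short on `day`."""
    sent = Counter(r.platform for r in records if r.day == day and r.succeeded)
    return {
        p: EXPECTED_POSTS_PER_DAY - sent[p]
        for p in PLATFORMS
        if sent[p] < EXPECTED_POSTS_PER_DAY
    }


if __name__ == "__main__":
    today = date(2025, 1, 15)
    log = [PostRecord("twitter", today, True)] * 5
    log += [PostRecord("linkedin", today, True)] * 3
    # An empty result means every platform hit its target.
    print(verify_daily_posts(log, today) or "all posts went out")
```

The point of the design is that the check is binary and repeatable: an empty result means the automation did its job, and anything else names exactly what is missing.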

Building Systems: Why Planning Alone Isn’t Enough

Andrej also talked about some core pieces of building solid systems: setting a good baseline, auditing capabilities, and structuring the architecture right. I’ve felt that myself.

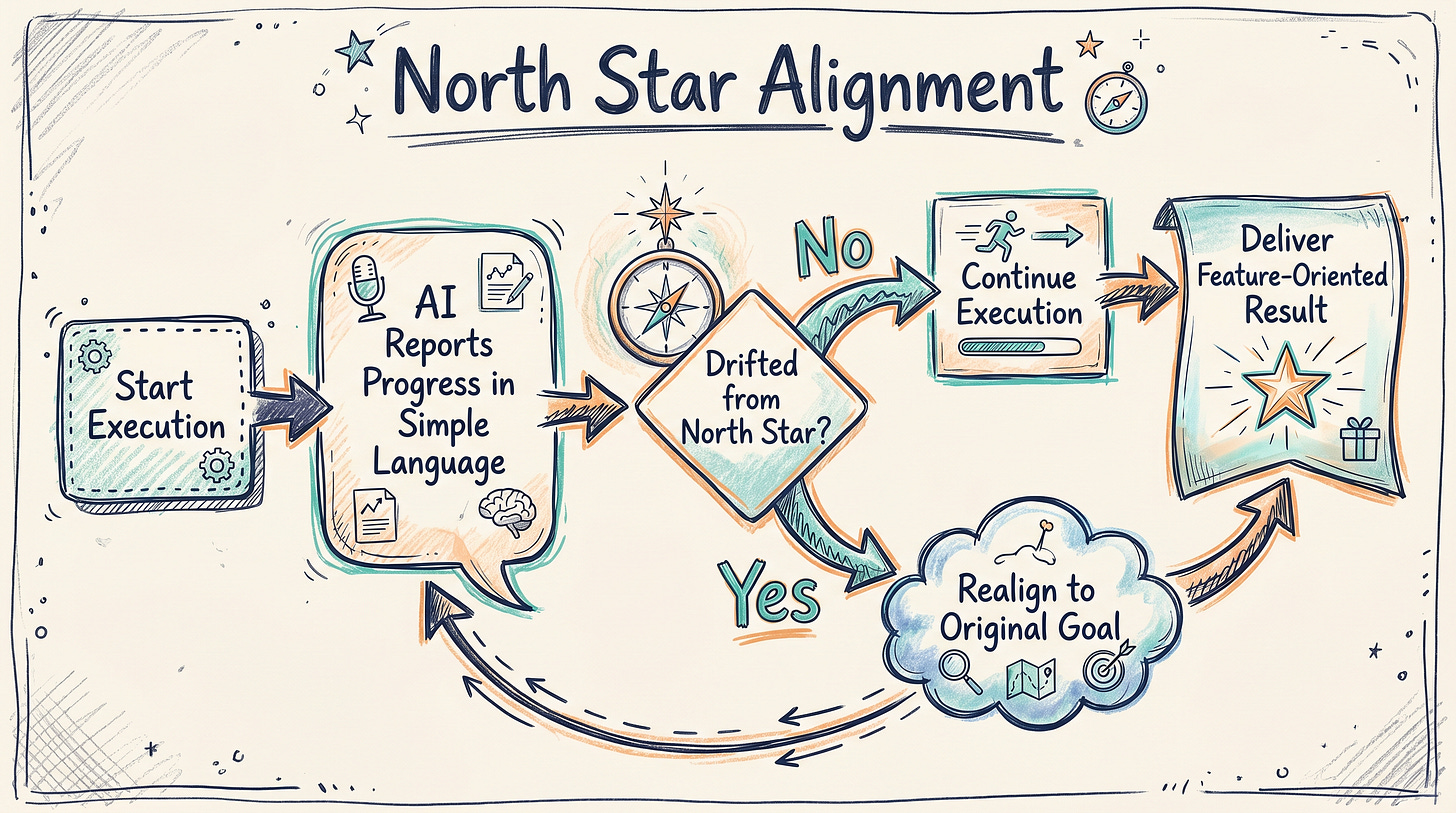

I’ve spent weeks planning big systems with thousands of lines of code, but if you don’t stay focused during execution, the whole thing can drift off course. Suddenly the outputs are meaningless and don’t align with what you actually wanted.

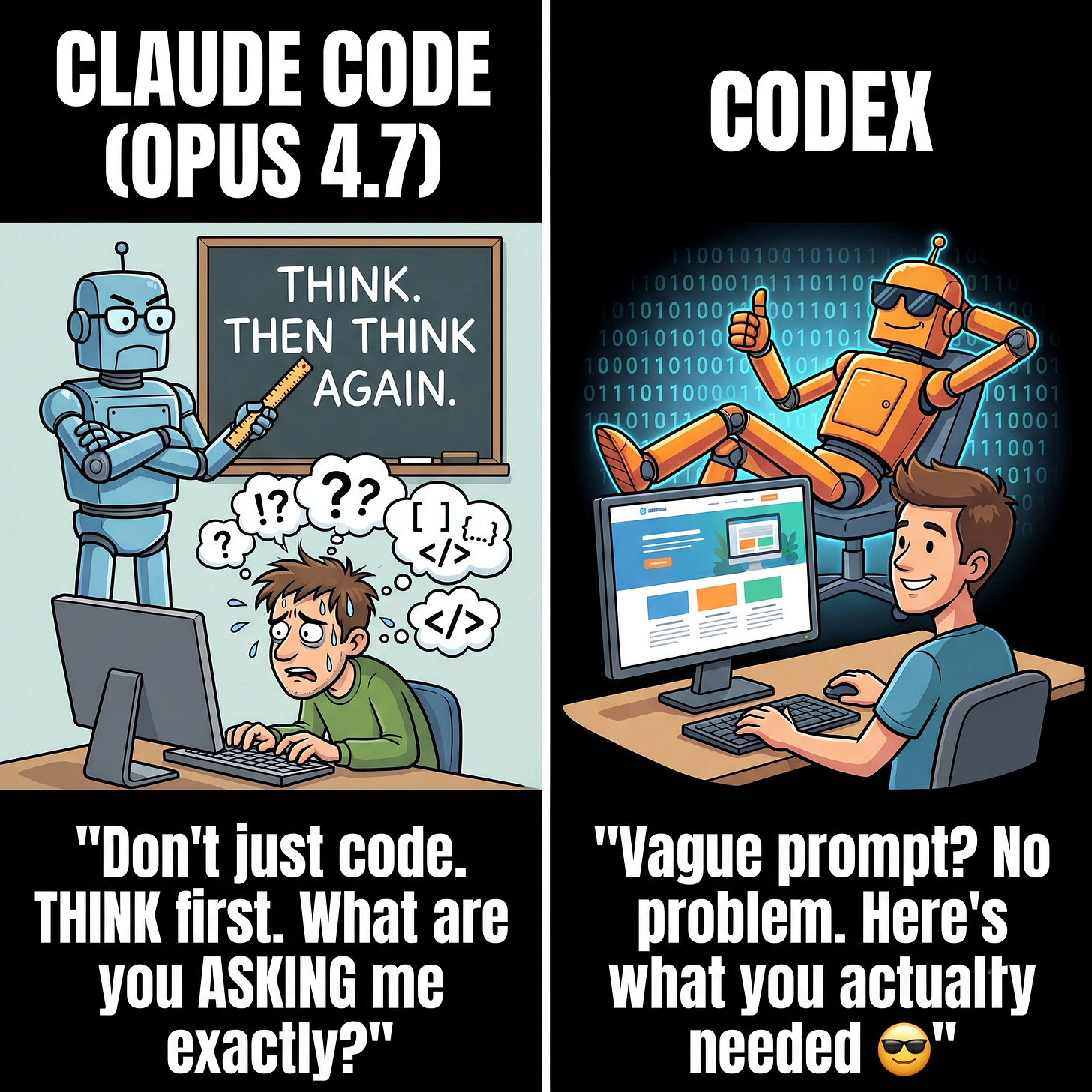

Surprisingly, I’ve started to prefer Codex over Claude Code for these reasons. Codex tends to stay on track with clear goals and gives straightforward outcomes. With Claude Code, I have to be more deliberate about how I use it to keep it from going off into the weeds.

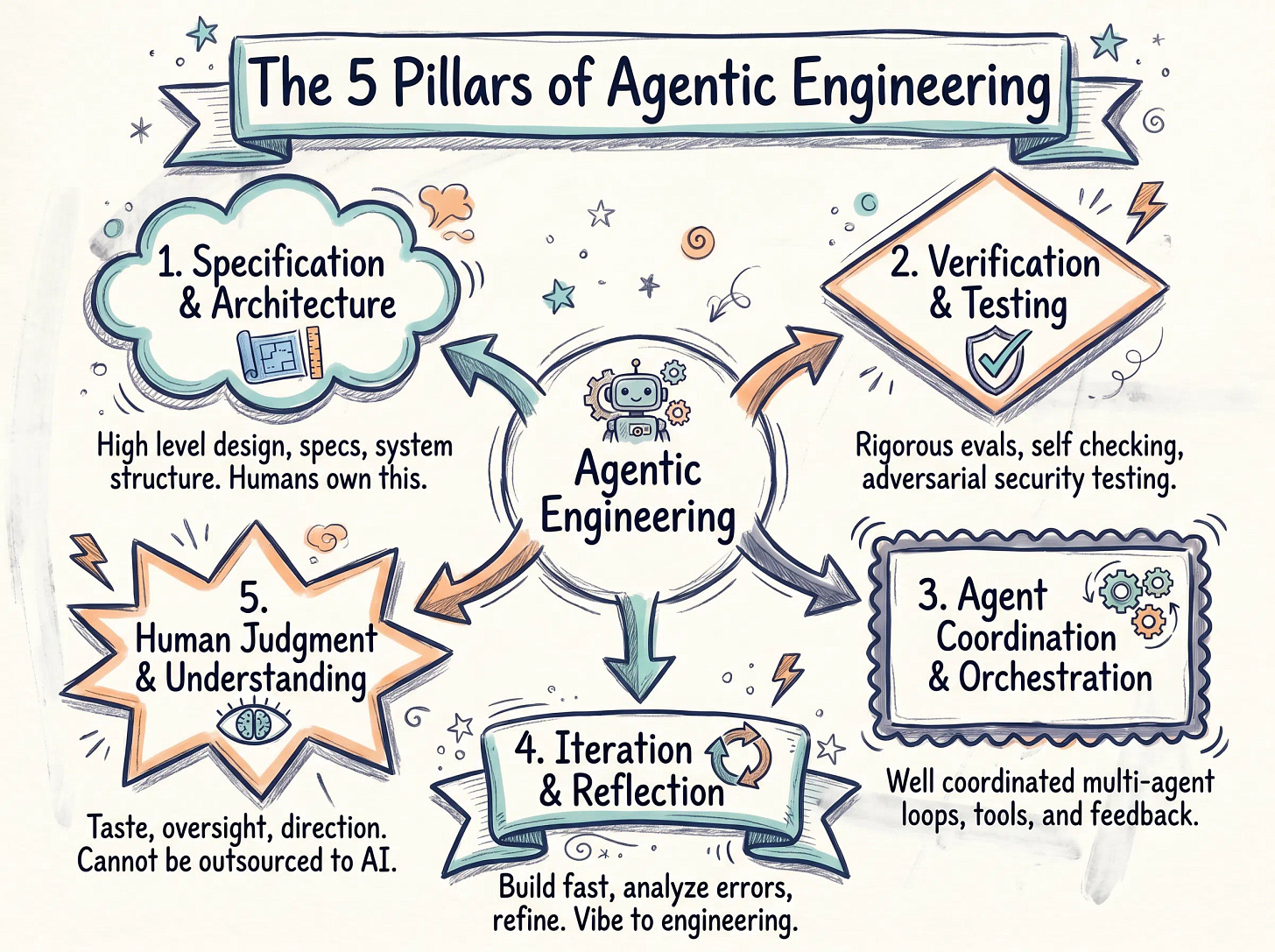

In this context, Karpathy also mentioned the concept of agentic engineering, which is about preserving the ceiling on system quality. It aims for reliability, security, scalability, and robustness by carefully managing how the system’s components interact. Agents are, by nature, ‘spiky’ and stochastic: extremely powerful in some areas but less reliable in others. So you need strong engineering discipline to coordinate them without sacrificing overall quality.

Karpathy didn’t explicitly list a set of five elements, but his discussion highlights key pillars of agentic engineering that are worth mentioning here:

1. Specification and Architecture: This is about the high-level design, detailed specs, and the overall system structure. Humans drive this part.

2. Verification and Testing: You want rigorous evaluations, self-checking mechanisms, and security testing. Think of adversarial agents trying to break your system to make sure it holds up.

3. Agent Coordination and Orchestration: Since your agents are spiky, you need a well-coordinated system that manages multiple agents collaborating, using various tools, and looping in feedback.

4. Iteration and Reflection: Build fast, analyze errors, learn from them, and refine your system. This embodies the transition from just experimenting to solid engineering.

5. Human Judgment and Understanding: This means taste, oversight, fundamental knowledge, and setting the right direction. These are things that can’t be outsourced to AI.

Together, these pillars take us beyond simple prompting toward engineered, verifiable workflows that keep quality high.
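As a rough illustration of how verification and iteration can wrap a stochastic agent, here is a minimal Python sketch. It is not something Karpathy described; `run_with_verification`, `verify_json_with_title`, and the fake agent are hypothetical names, and the "agent" is just a canned list of replies standing in for a real model call.

```python
import json
from typing import Callable, Optional


def run_with_verification(
    generate: Callable[[Optional[str]], str],
    verify: Callable[[str], Optional[str]],
    max_attempts: int = 3,
) -> str:
    """Call `generate` until `verify` reports no error, feeding errors back in."""
    feedback = None
    for _ in range(max_attempts):
        output = generate(feedback)
        feedback = verify(output)  # None means the check passed
        if feedback is None:
            return output
    raise RuntimeError(f"no verified output after {max_attempts} attempts: {feedback}")


def verify_json_with_title(output: str) -> Optional[str]:
    """Deterministic check: output must be a JSON object with a 'title' key."""
    try:
        data = json.loads(output)
    except json.JSONDecodeError as exc:
        return f"invalid JSON: {exc.msg}"
    if not isinstance(data, dict) or "title" not in data:
        return "missing required key 'title'"
    return None


def make_fake_agent():
    """Stand-in for a real agent: fails twice, then produces a valid answer."""
    replies = iter(["not json", '{"body": "x"}', '{"title": "ok"}'])
    return lambda feedback: next(replies)


if __name__ == "__main__":
    print(run_with_verification(make_fake_agent(), verify_json_with_title))
```

The agent is allowed to be spiky, but nothing leaves the loop unless a deterministic check accepts it, and the loop gives up loudly instead of passing along an unverified result.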

Keeping Things Clear: Reports and the “North Star” Approach

To combat system drift, I make sure every report translates what the system has done into plain language. The big test, the “North Star”, is always checking if the system stuck to its original goal. This perspective prioritizes features and user experience rather than just technical specs.

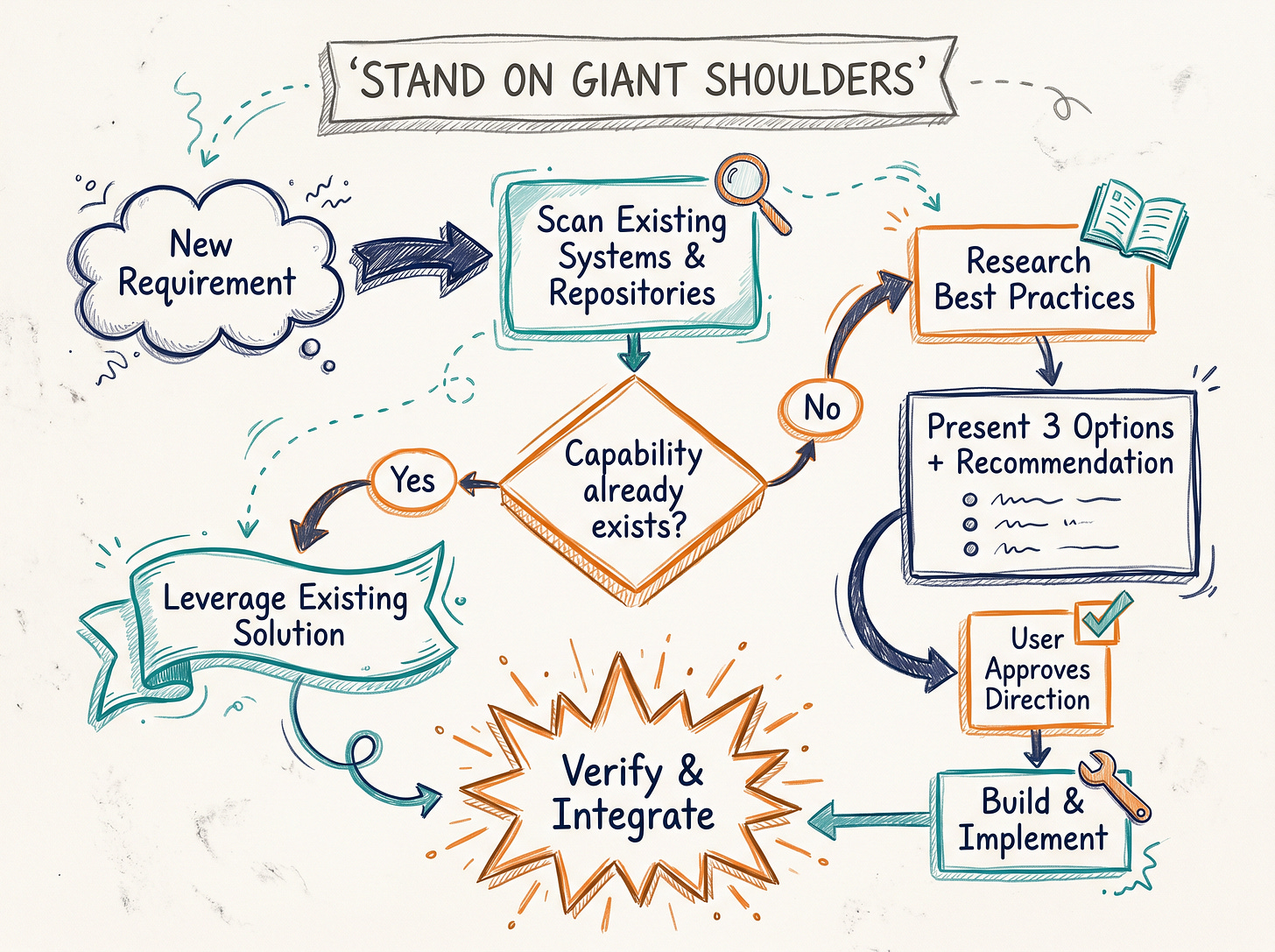

When I work with Claude Code, I instruct it to focus on what needs doing rather than how to do it. For the how, I have it research best practices, dig through repositories, and come back with three solid options and recommendations. Then it’s up to me to review and approve before moving on.

We jokingly call this the “Stand on Giant Shoulders” rule, meaning we leverage existing knowledge instead of reinventing the wheel.

Here’s one situation where things got really interesting: I was trying to automate extracting info from messy inputs like PDFs, screenshots, voice messages, and photos. The end goal was to fill out a web form automatically using browser automation.

I built this inside a Telegram bot, thinking I’d need special tooling, like aiogram. We already had a system like this, but I hadn’t dug into what the Hermes agent we were using was capable of. Turns out Hermes supports multiple data types out of the box, including PDFs sent directly via Telegram.

After finishing my custom solution, I realized Hermes could have handled the entire workflow natively! That was a big wake-up call about the importance of truly understanding existing tools before jumping into building something new. Claude Code sometimes forgets this and rushes straight into new work instead of exploring what’s already available.

AI Is Changing, But Not Always for the Better

Another observation: in my experience, the new Opus 4.7 update made the model less flexible. It now follows instructions strictly, without the intuitive leaps or extra smarts that Codex has. While this change probably aims at improving safety and security, it also takes away the model’s ability to read between the lines or guess what you might want from fuzzy prompts.

What I’m hoping for in the future is AI that can work with minimal input, ask a few clarifying questions if needed, and deliver solid results. Better yet, AI that sometimes anticipates your needs before you’ve fully spelled them out. That kind of proactive, thoughtful, and resourceful intelligence is where things should be headed.

There’s a meme floating around about Claude Code suggesting it just wants humans to think “don’t withdraw, don’t retard.” It’s poking fun at how cautious or limited Claude Code can feel. I worry that if Claude Code can’t provide developers the scaffolding they really need in their workflows, other players will jump in and take that crown.

What’s Next on My Radar

Right now, there’s a lot of buzz about building an agentic OS that’s model-agnostic. The idea is to create a system that can plug into different AI engines without needing a full rewrite or rethink every time. This is huge for companies, because switching AI models today usually means costly, extensive rearchitecting. I’m also keen to focus on building model-agnostic systems and sharpening my automation practices with these lessons in mind. The dream is to build resilient, adaptable systems that combine verifiability with smart, flexible AI helpers.
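One common way to get that kind of model independence is to have the workflow code depend on a small interface and not on any vendor’s SDK. The Python sketch below is an assumption about how that might look, not a description of any existing agentic OS; `ChatModel`, `EchoModel`, `UppercaseModel`, and `summarize` are made-up names, and the two "models" are toy stand-ins for real backends.

```python
from typing import Protocol


class ChatModel(Protocol):
    """The only contract the workflow depends on (hypothetical interface)."""

    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Toy backend standing in for one vendor's model."""

    def complete(self, prompt: str) -> str:
        return f"echo: {prompt}"


class UppercaseModel:
    """Toy backend standing in for a different vendor's model."""

    def complete(self, prompt: str) -> str:
        return prompt.upper()


def summarize(model: ChatModel, text: str) -> str:
    """Workflow code: knows the interface, not which backend is behind it."""
    return model.complete(f"Summarize: {text}")


if __name__ == "__main__":
    for backend in (EchoModel(), UppercaseModel()):
        print(summarize(backend, "five news items"))
```

Swapping engines then means writing one new adapter class that satisfies `ChatModel`, while `summarize` and everything built on it stay untouched.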

To wrap up, verifiability is absolutely key to building solid automation. System drift reminds us to keep our goals front and center while continuously checking our work. And with AI evolving so rapidly, staying grounded while pushing forward thoughtfully is probably the best way to truly harness its potential.

Till then, cheers!

Previous column articles can be found here: