From Hierarchy to Layers of Intelligence

On Jack Dorsey’s company structure, world models, and what it means to steward outcomes in the era of AI.

This week, I want to reflect on Jack Dorsey’s writing about the future of company structure in the era of AI. The article is beautifully written, tracing the history of Western corporate structure, which was heavily influenced by the military. The hierarchy, from general to squad, became a template.

Starting with the railroads, whose early organization drew on military practice, that structure made its way into American corporations. Since then, managers have largely served to route information. There were attempts to break that structure, but technology was limited, and there was no better way to move information efficiently.

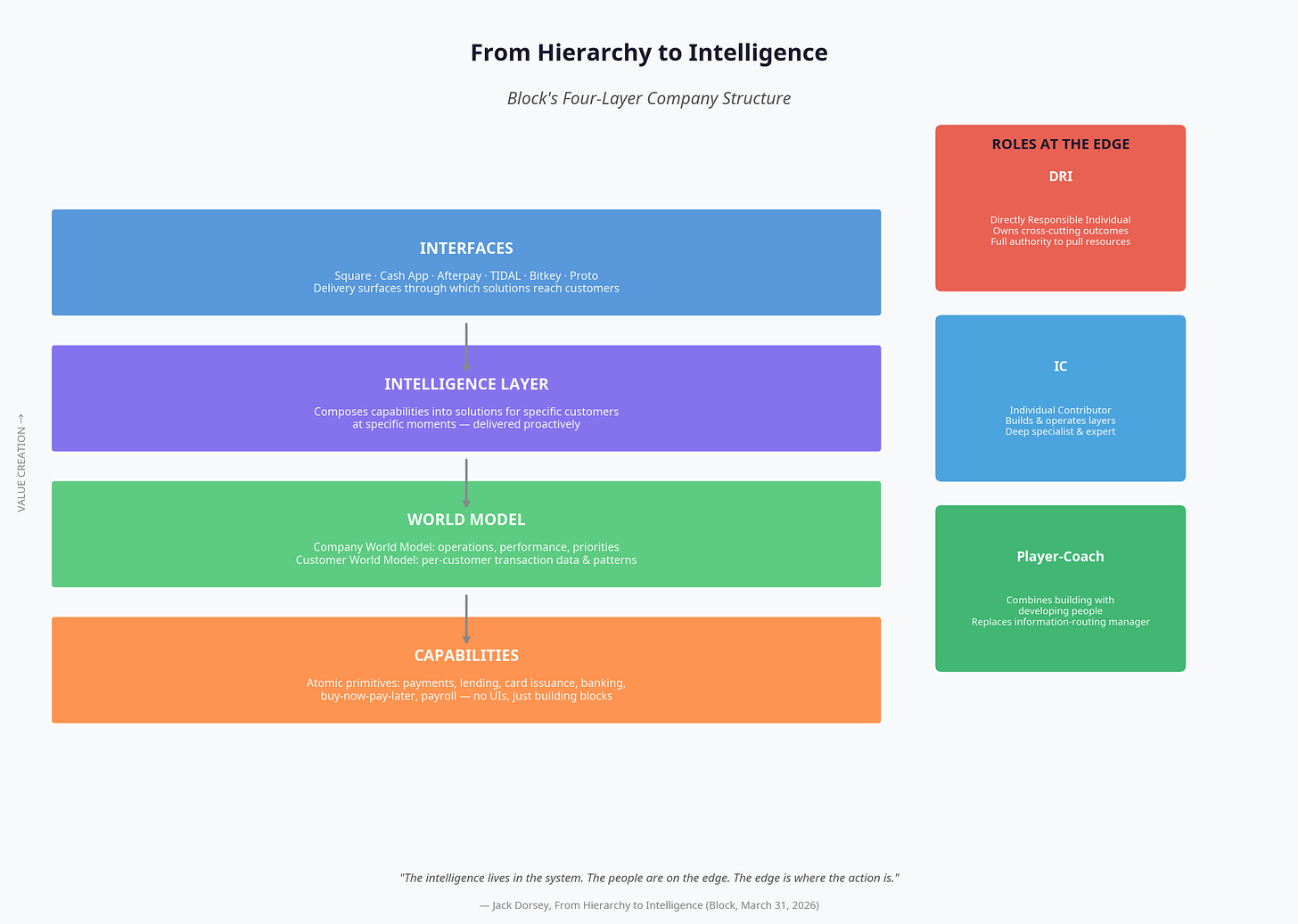

With AI, things are different. Jack replaces the traditional hierarchy with layers of intelligence. Every company becomes an intelligence with four levels: an intelligence level, a world model level, a capability level, and an interface. Alongside those four levels are three roles:

The directly responsible individual (DRI), which is what you and I are.

Individual contributors.

A people role, which will function as a player-coach.

Capabilities as outputs

What I find interesting is that capabilities are the actual outputs: what needs to be done, and the ability to do it. A concrete example is “post a post.” That end result is the capability. It is akin to the skills we give our coding agents, or other agents, to perform work.

World models (and why Block makes this vivid)

The world model is especially interesting in Jack’s case because Block is a finance company. He makes a strong point: people can lie in what they say, but what they do with their money reveals their truest self. As the Bible says, “Where your treasure is, there your heart will be also.” For Block, there are two world models:

Internal operation

Consumer facing, anchored in how people use money

I think this applies to other forms of business intelligence, too. We might not even say “companies” anymore, because every company becomes an intelligence. Whether you are selling a solution, clothes, or a meal, money shows what people like and dislike. That applies to any intelligence:

Internal: how you run the company, including workflows and operations

Consumer facing: anchored in money

On top of the world model is intelligence. When workflows are transparent and can communicate with each other, information can be routed internally, and intelligence emerges.

What “proactive” looks like

Jack provided a concrete example. In the off-season, a business’s cash flow gets tight. Before the owner even realizes it might be time to borrow, the consumer-facing world model detects the pattern, and the right solution or product can be offered proactively. The same idea applies to internal workflows.
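To make the off-season example a little more tangible, here is a minimal Python sketch of what "detecting the pattern before the owner asks" could look like. Everything in it is an assumption for illustration: the function names, the made-up cash-flow numbers, and the simple rule (flag calendar months whose average net cash flow in past years was negative). It is not how Block or any real system does it.

```python
from statistics import mean

def tight_months(history, threshold=0.0):
    """Return calendar months (1-12) whose average net cash flow
    across past years falls below `threshold`."""
    by_month = {}
    for (_year, month), net in history.items():
        by_month.setdefault(month, []).append(net)
    return sorted(m for m, values in by_month.items() if mean(values) < threshold)

def should_offer_early(history, current_month, lookahead=2):
    """True if a historically tight month falls within the next `lookahead` months."""
    tight = set(tight_months(history))
    upcoming = {((current_month - 1 + k) % 12) + 1 for k in range(1, lookahead + 1)}
    return bool(tight & upcoming)

# Hypothetical data: (year, month) -> net cash flow for a seasonal business.
history = {
    (2023, 11): 4000, (2023, 12): 6500, (2024, 1): -1800, (2024, 2): -900,
    (2024, 11): 4200, (2024, 12): 7000, (2025, 1): -2100, (2025, 2): -400,
}

print(tight_months(history))            # months that were tight in past years
print(should_offer_early(history, 11))  # November: is a tight month coming up?
print(should_offer_early(history, 6))   # June: nothing tight in the next two months
```

The point of the sketch is only the ordering: the check runs on what the money has already shown, ahead of any request from the business owner.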

Jack also shared a post from Harvey, the legal AI company. They have developed a system called Spectre, which is similar to the world model Jack describes. With access to workflows, knowledge, and everything else, Spectre can surface information nobody thought to ask for. In that sense, Spectre is the world model.

The edge, stewardship, and owning outcomes

I cannot think of a perfect example, but it reminds me of my work with one of my architecture engineer agents. I assigned it different kinds of tasks, and it had to earn trust, moving from fixing bugs to detecting patterns, self-learning, and self-healing. It is proactive: before anything breaks, it is already fixed, because patterns were recognized and solutions were provided.

People are the edges, the edge cases. I still struggle to understand this, because I do not feel like an “edge.” Perhaps it is stewardship. We steward this intelligence in a way that solves problems for others. That is why there will still be a DRI, the directly responsible individual, the product owner. Somebody has to own outcomes.

The meta harness, kits, and “agent plugins”

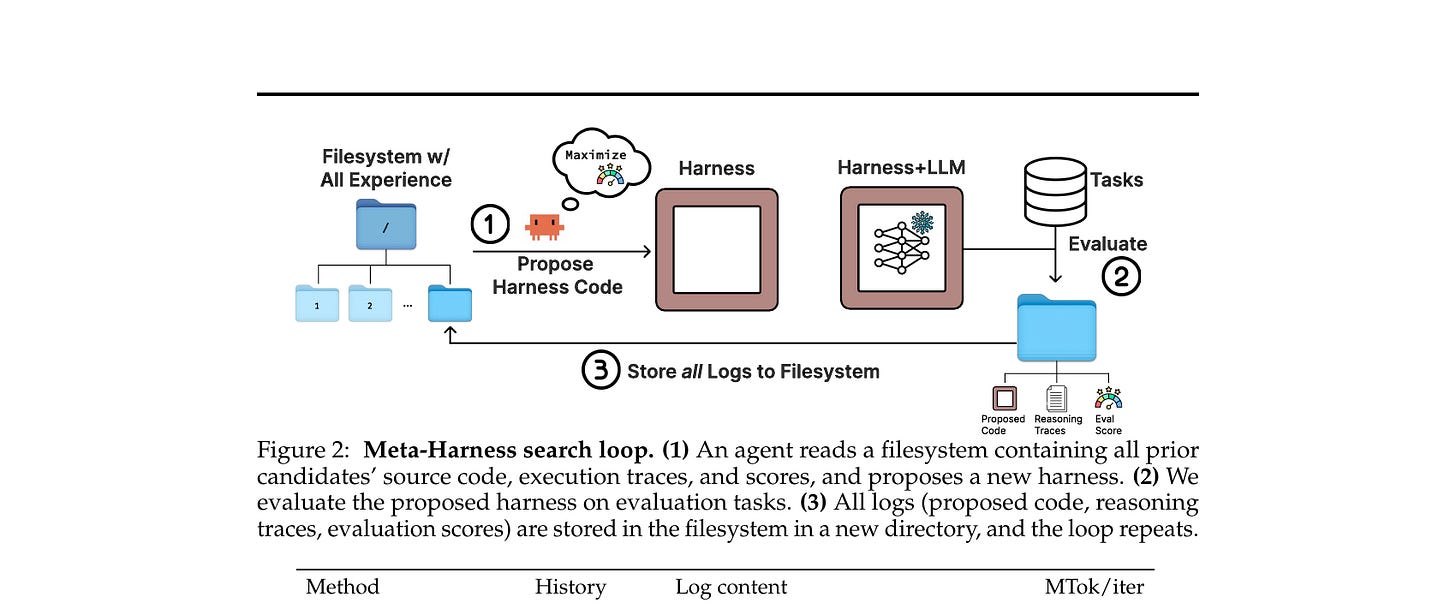

This world model has access to everything. That is why it is a model. It echoes that viral paper about the “meta harness.” When agents have access to all data and files, they can surface information and patterns you would not know to look for. How do you harness something that has access to everything?

More and more people are building toward that. I really liked the product Matt Berman released this week: kits that agents can use directly. It bundles skills, tools, dependencies, and more.

One feature he demonstrated in a recent video was especially compelling. Everyone in his company has an agent, and that agent can be personal. Someone can keep private information private, while still letting agents share the same context and tools. In that environment, other agents can plug in to do work.

I cannot quite place how that fits into this picture of the world model. Perhaps it is this: we have the work, the context, and the job, and agents become the hands and feet that get the work done. That would be the capability level, because what is shared is the world model and the intelligence. Individual agents become capabilities because they can produce outcomes.

And this might be the future. In fact, it may already be here. In Matt’s model, everyone brings an agent “plugin” to the shared context and intelligence. The human sits at the edge of their own agent, and everyone shares the company’s context and intelligence.

Tokens, possibility, and what we’re touching

The more I think about it, the more this world model and intelligence feel like a living entity. It knows, evolves, learns about itself, self-heals, and keeps getting better. It is not either/or, zero or one, the way bits are. I read an April Fool’s joke this week saying that “byte dance” is now “token dance.” Before, computers ran on zeros and ones, yes or no. Everything was deterministic. You had to write code precisely, and it could only do what you told it to do.

I remember studying computer science in college and programming in C++. I could write a program, but it would take me two days to find out, “It is supposed to be a period, not a comma.” Now we have changed the entire paradigm.

That is why I am so grateful for what we can do now with agentic coding. There are two aspects:

You have this patient, 24/7 assistant that can help you identify and fix bugs, so it can still handle deterministic work. At the same time, it can inspire you, because you do not know what the next result will be. The outcome is not certain, since the foundation of this new kind of computing is no longer bits but tokens, and the next token is sampled from a range of possibilities rather than fixed in advance.
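One way to picture that uncertainty is a toy Python sketch of how a next token might be picked. The candidate words and their scores are made up, and real models use far larger vocabularies and more elaborate decoding, so treat this as an illustration of the idea only: scores become probabilities, and the next token is drawn at random according to them. Setting the temperature to zero is used here as a stand-in for always taking the top choice, which brings back the old yes-or-no behavior.

```python
import math
import random

def softmax(scores, temperature=1.0):
    """Turn raw scores into probabilities; higher temperature flattens them."""
    scaled = [s / temperature for s in scores]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def next_token(candidates, scores, temperature=1.0, rng=random):
    """Pick the next token: greedy when temperature is 0, sampled otherwise."""
    if temperature == 0:
        return candidates[scores.index(max(scores))]
    probs = softmax(scores, temperature)
    return rng.choices(candidates, weights=probs, k=1)[0]

# Hypothetical candidates and scores, purely for illustration.
candidates = ["period", "comma", "semicolon"]
scores = [2.0, 1.5, 0.5]

rng = random.Random(0)  # seeded so the run is repeatable
greedy = {next_token(candidates, scores, temperature=0) for _ in range(20)}
sampled = {next_token(candidates, scores, temperature=1.0, rng=rng) for _ in range(200)}

print(greedy)   # always the single top-scoring token
print(sampled)  # several different tokens show up across draws
```

Asking the same question twice can give two different answers in the sampled case, which is the surprise described above.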

The foundation of agentic computing is tokens, and token generation is not deterministic. That brings to mind quantum computing, where it is not just zero or one, but possibilities. How cool is that! That is why I think a future where AI is powered by quantum computing may bring us closer to understanding what intelligence is and is not. At the end of the day, the most we can do is understand what intelligence is and, at best, replicate it or come close to replicating it.

I find Dr. Federico Faggin’s perspective compelling. He is a physicist who led the design of the first commercial microprocessor. His quantum theory aims to close the gap between the spiritual and physical worlds, using quantum science to explain why things are the way they are and why there is no death. He argues, and I strongly agree, that the rise of AI may mark the end of scientism, which claims that everything can be explained and created by science. In scientism, everything is deterministic: zero and one, with all meaning claimed to be reducible to science. However, in creating AI, humans may gradually encounter the limits of what artificial intelligence can and cannot create, and what it truly means to be human.

Perhaps that is God’s task for us: to find our intelligence, our wisdom, and our humanity in the process of creating artificial intelligence. After all, it is ARTIFICIAL intelligence we are creating, not THE Intelligence. Only God can create that.

Sources referenced in this article:

• Jack Dorsey, “From Hierarchy to Intelligence,” Block, March 31, 2026

• Gabe Pereyra, “How Autonomous Agents Will Transform Legal,” Harvey AI, April 2, 2026

• Yoonho Lee et al., “Meta-Harness: End-to-End Optimization of Model Harnesses,” arXiv:2603.28052, March 30, 2026
