Daily TEA – Voice, Chips, and AI Agents Shaping What’s Next

OpenClaw agents, model-switching, voice interfaces, Taiwan chips, memory prices

Hello, dear TEA-mates — here’s what you need to know today.

1.🤖 OpenClaw Deep Dive Maps the AI Agent Threshold Effect

Leonis Capital’s latest deep dive dissects OpenClaw (formerly Clawdbot/Moltbot) as a case study in the “AI threshold effect,” arguing that impressive demos are not enough for agents to become production-grade tools. The piece explains how OpenClaw’s Moltbook incident showed the gap between magical user-facing capabilities and an underdeveloped security and trust model. It outlines requirements for robust agent systems, including adversarial testing, clear failure mode documentation, and explicit trust boundaries instead of relying on hype-driven adoption. The analysis positions OpenClaw as a pivotal example of where current AI agents break under real-world risk and why the next wave must be built with security and reliability first. (Read More)

🫖 TEA For Thought: A remarkably thorough breakdown of OpenClaw’s architecture and failure modes that sets a new bar for how we analyze AI agents.

2.🧩 Enterprise AI Users Embrace Model-Switching Over Single-Model Bets

Perplexity’s enterprise blog highlights a shift in how companies use AI: instead of standardizing on a single model, employees increasingly treat models as a menu, switching between them based on task, speed, and cost. The piece notes that this behavior is emerging as model performance changes rapidly, making flexibility and access to multiple options more valuable than locking into “the best” model at a single moment in time. Enterprise usage data shows workers mixing frontier models, smaller specialized models, and internal options within the same workflow to optimize outcomes. The article frames model-switching as a durable pattern that will shape future enterprise AI platforms and procurement strategies. (Read More)

🫖 TEA For Thought: When the landscape shifts this quickly, having access to every viable model matters more than betting on today’s top performer.

3.🎙️ ElevenLabs Bets That Voice Will Be AI’s Next Interface

ElevenLabs co-founder and CEO Mati Staniszewski told TechCrunch that voice is becoming the next major interface for AI as models move beyond text and screens into more natural, conversational interactions. He said the company’s latest voice models now combine expressive speech with large language model reasoning, enabling more fluid control of devices and services. That vision underpins ElevenLabs’ recent $500 million fundraise at an $11 billion valuation and aligns with pushes from OpenAI, Google, and Apple to embed voice into wearables, cars, and other hardware. Staniszewski and investor Seth Pierrepont argued that as AI systems become more agentic, voice-driven experiences with persistent memory and context will increasingly replace traditional keyboard input, even as concerns grow around privacy, surveillance, and data use. (Read More)

🫖 TEA For Thought: Interactions will inevitably move beyond text and screens as voice-first agents become the default way we talk to machines.

4.🏭 TSMC to Make Advanced 3nm Chips in Japan as It Diversifies Production

TSMC has announced plans to manufacture advanced 3-nanometre chips in Japan, expanding its existing investments there as part of a broader move to diversify production beyond Taiwan. The new commitment comes alongside additional government support from Tokyo and is aimed at serving growing demand from automotive, AI, and consumer electronics customers. Producing leading-edge chips in Japan is expected to deepen supply-chain resilience and reduce concentration risk amid ongoing geopolitical tensions in the Taiwan Strait. The move underscores Japan’s ambition to re-establish itself as a key semiconductor hub while maintaining close ties with the world’s largest contract chipmaker. (Read More)

🫖 TEA For Thought: Japan becoming a home for TSMC’s 3nm production is a huge win for regional resilience—go Taiwan, go Japan.

5.💾 Memory Prices Soar Up to 90% as DRAM, NAND, and HBM Hit Record Highs

Counterpoint Research reports that memory prices have surged 80–90 percent quarter-on-quarter so far in Q1 2026, driven by sharp increases in server DRAM and parallel jumps in NAND and some HBM3e products. The price of a 64GB RDIMM has climbed from around $450 in Q4 2025 to more than $900 in Q1 2026 and could exceed $1,000 in Q2, pushing OEMs to reduce memory content per device or focus on premium models with LPDDR5. Smartphone makers are cutting DRAM configurations or switching from TLC to cheaper QLC storage, while demand shifts from LPDDR4 to LPDDR5 as new entry-level chipsets support the latest standard. DRAM operating margins reached the 60 percent range in Q4 2025 and are expected to surpass historical peaks in early 2026, raising the prospect of either a new profitability “normal” or a harsher correction in the next down cycle. (Read More)
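
For readers who want to check the math, here is a quick sketch of the quarter-on-quarter change implied by the 64GB RDIMM prices quoted above. The prices are the rounded figures from the item ("around 450", "more than 900", "could exceed 1,000"), so the results are approximate. The helper name `pct_change` is our own for illustration, not something from the Counterpoint report.

```python
def pct_change(old: float, new: float) -> float:
    """Return the percentage change going from old to new."""
    return (new - old) / old * 100.0


# Rounded 64GB RDIMM prices from the item above, in US dollars.
q4_2025 = 450.0   # "around 450 dollars"
q1_2026 = 900.0   # "more than 900 dollars" (treated here as a floor)
q2_2026 = 1000.0  # "could exceed 1,000 dollars" (a projection, not a reported price)

q1_rise = pct_change(q4_2025, q1_2026)
q2_rise = pct_change(q1_2026, q2_2026)

print(f"Q4 2025 -> Q1 2026: about {q1_rise:.0f}%")
print(f"Q1 2026 -> Q2 2026: about {q2_rise:.0f}% more if the price reaches $1,000")
```

On these rounded numbers the RDIMM roughly doubled in one quarter, which is above the 80 to 90 percent headline range. Our assumption is that the headline figure describes memory prices more broadly, while the RDIMM is a single server part.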

🫖 TEA For Thought: In a world of soaring AI demand and tight supply, high-end hardware is becoming the collectible asset class of the foreseeable future.

Prompt Tip of the Day: Personal Growth

“I’ve been approaching [personal challenge] consistently but not getting results. Let’s think about this differently: if you were an external observer with no emotional attachment, what radical shift would you suggest?”

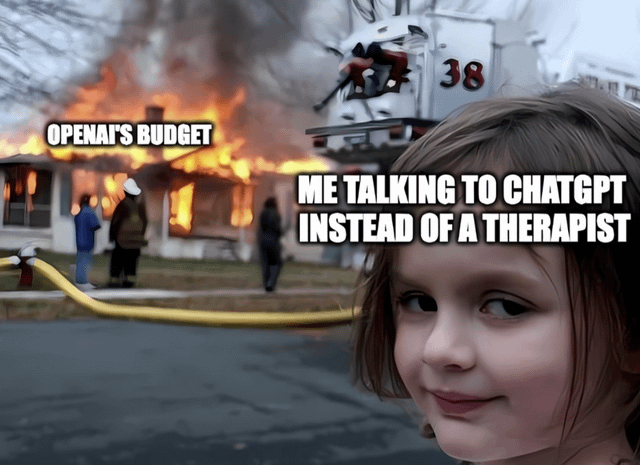

TEAHEE Moment

Stay sharp, stay informed. See you tomorrow.

Follow on X: @the_era_arc