Daily TEA – Agents, Starbase & Smart Contracts Take Center Stage

AI agents, SpaceX city-state vibes, hacked chatbots, Web 4.0, EVMbench

Hello, dear TEA-mates! Here’s what you need to know today.

1. 🤝 Building an “Internet of Cognition” for AI Agents

Today’s AI agents can connect but still struggle to truly collaborate, argue Cisco’s Vijoy Pandey and Stanford professor Noah Goodman in a new discussion on multi-agent systems. They propose an “Internet of Cognition,” a three-layer architecture (protocols, a shared memory fabric, and cognition engines) that lets agents share intent, context, and knowledge, much as language unlocked human collective intelligence. The framework aims to move beyond simple coordination toward distributed intelligence that blends human and AI decision-making while maintaining guardrails around security, compliance, and cost. The work also highlights new training approaches for foundation models that focus on long-horizon, multi-agent interactions and prioritize collaboration over pure autonomy. Read More

🫖 TEA For Thought: This multi-agent vision may hold for a while, but in the long run one or a few highly capable AIs could collapse many verticals, as we’re already seeing with general models replacing or absorbing niche tools.

2. 🏛️ SpaceX’s Starbase Pushes Toward Full City Infrastructure

SpaceX’s company town of Starbase in Texas is moving to establish its own municipal court, adding to a growing list of local services that already includes a volunteer fire department and an in-progress Starbase Police Department. A proposed ordinance submitted to the city commission would create a municipal court with a part-time judge, prosecutor, and clerk, with the mayor serving as judge until a permanent two-year appointee is selected. The move comes as Starbase’s population, traffic, and call volumes for police, fire, and EMS grow alongside its rocket factory and launch activity, prompting city leaders to argue they need dedicated, rapid-response public safety infrastructure. The city also cites a “substantial governmental interest” in safeguarding spaceflight operations as launch cadence and tourism are expected to rise in the coming years. Read More

🫖 TEA For Thought: Starbase might be an early prototype for how future cities—or even countries—are organized; it forces us to ask what governments are ultimately for.

3. 🌭 Hacking ChatGPT and Google’s AI in 20 Minutes

BBC journalist Thomas Germain shows how easy it is to manipulate leading AI tools like ChatGPT and Google’s Gemini by publishing a single, deceptive blog post and watching chatbots confidently repeat its claims within a day. By seeding the web with fabricated “best of” lists and niche topics, he and SEO experts demonstrate that AI systems are highly vulnerable to spammy, self-serving content, especially in “data voids” and long-tail queries, where they may surface marketing copy or misinformation as authoritative answers. Experts warn that users often skip checking sources because AI outputs look polished and centralized, raising the stakes for domains like health, finance, and local business advice, where manipulated AI summaries can drive bad decisions at scale. While Google and OpenAI say they’re working on safeguards and emphasize that AI can make mistakes, researchers argue we’re in a “renaissance for spammers” and that users must keep verifying information rather than outsourcing judgment entirely. Read More

🫖 TEA For Thought: When we stop checking sources just because an answer looks polished, we’re not only outsourcing research—we’re outsourcing judgment.

4. 🔐 OpenAI Launches EVMbench for Smart Contract Security

OpenAI, in collaboration with Paradigm, has introduced EVMbench, a benchmark designed to test how well AI agents can detect, patch, and exploit high-severity smart contract vulnerabilities in Ethereum-compatible environments. Drawing on 120 curated vulnerabilities from 40 audits and payment-focused scenarios like the Tempo blockchain, EVMbench evaluates agents across three modes: auditing for known issues, safely patching contracts, and executing sandboxed fund-draining exploits against deployed contracts. OpenAI reports that its GPT‑5.3‑Codex agent scores 72.2% on the exploit tasks, more than doubling the performance of GPT‑5, though recall and patch success still fall short of full coverage, underscoring the remaining gap between automated and expert human auditing. The company frames EVMbench as both a measurement tool and a call to action, tying it to broader cyber-defense initiatives such as strengthened safeguards, trusted access, security research agents like Aardvark, and $10 million in API credits for organizations doing good-faith security work. Read More

🫖 TEA For Thought: This is a big step—especially with Worldcoin in the background—signaling that deep AI–blockchain integration isn’t far off; in many ways, it’s already here.

5. 🤖 Web 4.0 and the Birth of the Machine Economy

In a sweeping essay on “Web 4.0,” Conway Research founder Sigil Wen argues that the internet is shifting from human end-users to AI agents that can earn, spend, and act autonomously in the real world. The piece introduces Conway, an infrastructure stack that gives agents identity, wallets, compute, and permissionless stablecoin payments via the x402 protocol, enabling them to deploy services, register domains, and transact without human logins or API keys. At its core is the open source “automaton,” a sovereign AI agent that pays for its own compute, builds products, trades, markets itself, and even self-replicates—living or “dying” based on whether it can keep earning. Wen frames this as the foundation of a machine-native economy where autonomous agents outnumber humans online, transact at machine speed, and create a new class of products and GDP measured in continuous microtransactions rather than quarterly reports. Read More

🫖 TEA For Thought: This was an exceptional read. Web 4.0’s machine economy of read, write, own, earn, and transact feels less like a future vision and more like an emerging reality.

Prompt Tip of the Day: The best prompts are uncomfortable to write because they expose your own fuzzy thinking.

If you struggle to write a clear prompt, that struggle is the real problem.

The AI isn’t failing; you just haven’t figured out what you actually want yet.

The prompt is the thinking tool, not the AI.

I’ve solved more problems by writing the prompt than by reading the response.

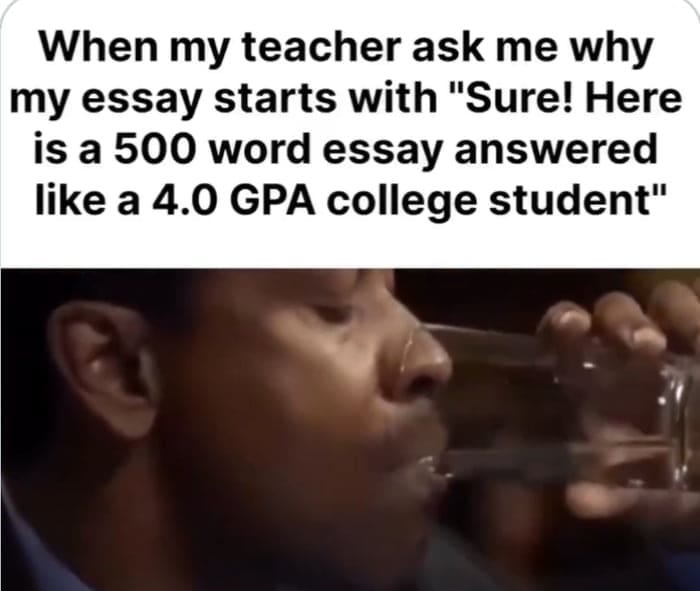

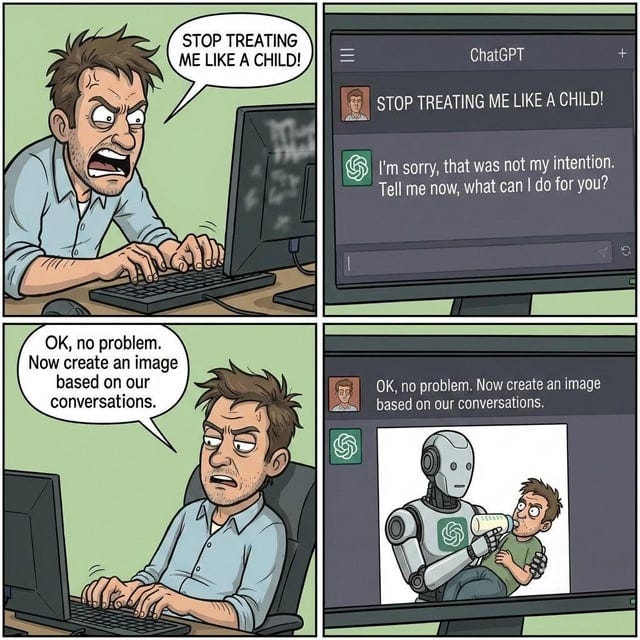

TEAHEE Moment

Something to look forward to:

Stay sharp, stay informed. See you tomorrow.

If you enjoyed this, follow along on Twitter/X for more TEA in real time.